
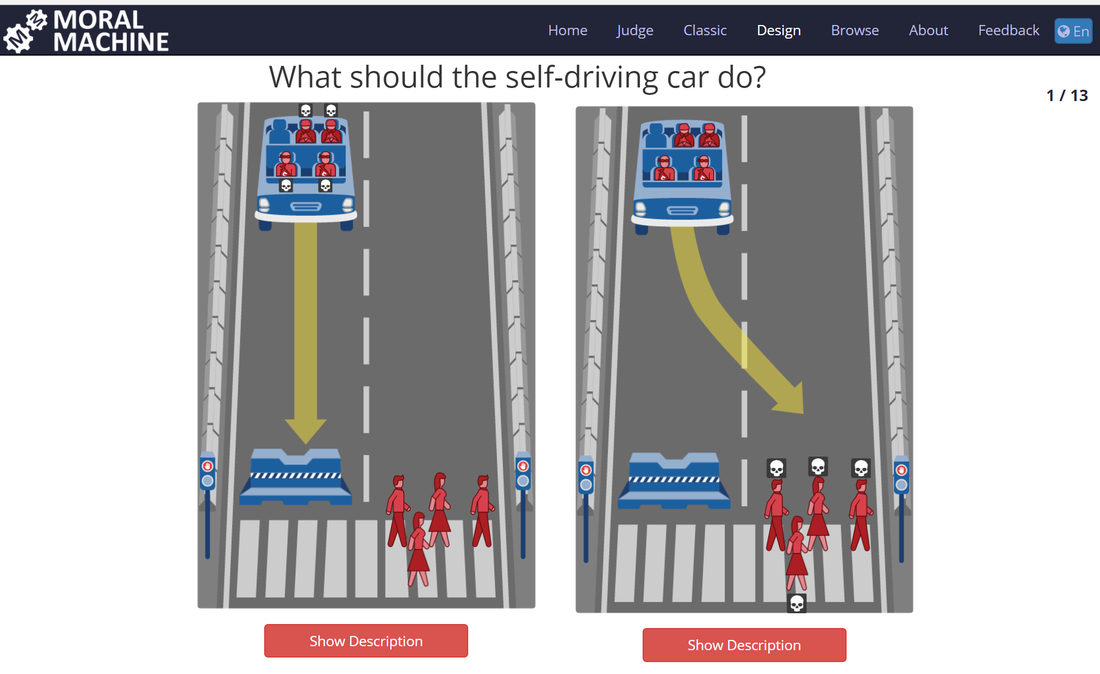

Artificial intelligence (AI) and machine learning (ML) are becoming an integral part of the development of present-day technology. Yet the public discussion often centers on the notion of a ‘human-like’ robot, and my concern is that the impact gets downplayed. How, then, should we look at a future with AI? One helpful approach may be to examine morality and consciousness, or rather the lack of both, in decision-making under a new paradigm.

Humans have evolved through a complex, long-term process of trial and error. Our biological machinery has been honed over thousands of years to optimize (mainly) for survival and procreation, and for these tasks the human mind is highly advanced. However, we have to realize that the subjective aims of the human mind are the primary reason and motivation for all the decisions it makes. Morality is a key element in sustaining these two goals of the human species, and it has successfully introduced a human-wide consensus that improves our chances of achieving them.

As we look at crafting AI for better data-driven decision-making in critical processes, such as optimizing far-reaching and complex value chains or making real-time decisions in applications such as self-driving cars, we should recognize that without coded-in morality, AI will act without any concept of morality or human subjectivity. In short, it will optimize any and all decisions for the rationally best outcome on the desired parameters rather than for what ‘a good person would do.’

A Future with Less Morality May Be One Humans Find Uncomfortable

Considering the long, grinding process of trial and error that humans have undergone to evolve, we can expect AI to dwarf it by going through its own trial and error far more rapidly. Especially with connected machines and the vast availability of real-time data, we can expect the process to be fundamentally faster and unlike anything seen before.
The entire human species has used a moral compass to guide development and unite humanity behind a broader direction. For the first time in history, we may have a future chapter of innovation written and executed by a new kind of decision-making, one that is entirely non-human.

However, we should not blindly assume this future is far off. As the authors of Moral Decision Making Frameworks for Artificial Intelligence point out, AI is already used in highly complex ethical fields of decision-making such as organ transplants and waiting lists, effectively deciding who lives a little longer and who does not. Yet one does not even have to look to medicine to find life-altering decisions made by non-humans. AI is already widely used in determining eligibility for credit and in underwriting credit decisions, including loans, which determines who has the opportunity to access finance for a specific purpose and, again, who does not. This too has far-reaching consequences.

We are already far along in introducing an objective decision maker into situations that truly matter, while humans make a vast number of decisions each day with limited information and, most importantly, limited objectivity. We shouldn’t write off the human brain, however. It is truly a cognitive miracle that derives information from all the senses in real time and, through conscious and unconscious steering, shapes our thoughts and actions. Yet much of human morality centers on self-protection in complex decision-making, and the information accessible to humans is a fraction of what AI will be able to tap into and process.

Well Then – Can We Introduce Human Morality Into Technology?

It’s certainly a possibility, and one that several leading researchers and authors of our future are pursuing.
For example, the Future of Life Institute and Duke University have been active in efforts to introduce ethical engines into artificial intelligence and decision-making through applications of game theory. Researchers often look at now-infamous examples, such as the self-driving car facing an unavoidable pedestrian accident, and attempt to find tangible ways of introducing intended morality that are actionable and clear. It is a complex task, one that involves classifying actions as morally right or wrong in a universal and generally accepted way. Establishing a pre-written ‘moral compass’ would allow an element of human decision-making to be codified and carried into applications that will, at some point, learn on their own. We can generally think of the concept in different phases:

We can argue that we currently find ourselves in the first phase, and it remains an open question whether we will ever reach the third phase at all.

Morality as a Lens for Future Outlook

The future of technology and AI will likely be a series of events, some controlled and pre-planned, others the result of unintended consequences. Morality will be a key component in determining how that future looks, and considering its absence may be a useful exercise for understanding why the field is so important. If we never reach the third phase, we will also be looking at a future shaped largely by a presence that lacks consciousness. The elements of such a future may be impossible to imagine ahead of time, yet their implications are likely to be paramount.

Written by Markus Lampinen, CEO of Crowd Valley, Inc. This post originally appeared on Let's Talk Payments. Photo: MIT Moral Machine.
